Artificial intelligence is no longer an emerging technology waiting at the periphery of society. It is embedded in hiring systems, financial services, predictive policing, healthcare diagnostics, education platforms, military applications, and even democratic processes. What was once experimental has now become infrastructural. The question is no longer whether AI should be regulated, but how — and how urgently.

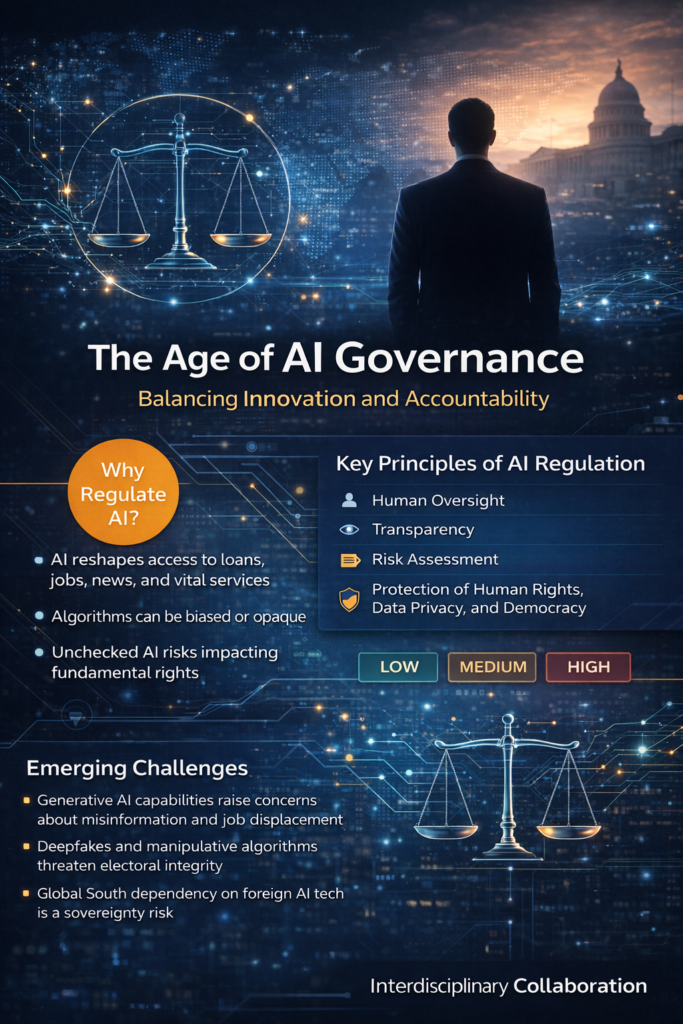

Across the world, governments are grappling with the reality that technological acceleration has outpaced institutional preparedness. Algorithmic systems influence who gets a loan, who is flagged as suspicious, which medical treatment is recommended, and what information individuals see online. These systems operate at scale, often invisibly, and sometimes without clear accountability mechanisms. When bias, opacity, or error enters the system, the consequences are not abstract — they are deeply human.

The emerging challenge is not simply technological but constitutional. AI governance is fundamentally about power: who designs systems, who deploys them, who benefits from them, and who bears their risks. In democratic societies, this distribution of power must be aligned with rule of law principles, human rights standards, and procedural fairness. Without deliberate safeguards, AI can silently restructure social hierarchies and institutional decision-making in ways that are difficult to detect and even harder to reverse.

One of the most significant global developments in this space has been the adoption of the European Union AI Act in 2024, which introduces a risk-based framework that sorts AI systems into tiers by potential harm: unacceptable-risk practices are prohibited, high-risk systems face strict obligations, limited-risk systems carry transparency duties, and minimal-risk systems are largely left alone. While the Act represents a major milestone, it also highlights a broader global tension: how to balance innovation with accountability. Overregulation risks stifling beneficial research and entrepreneurship; underregulation risks systemic harm and erosion of public trust. The equilibrium is delicate, but necessary.

Meanwhile, rapid advances in generative models have intensified regulatory urgency. The public release of ChatGPT in November 2022 marked a turning point in mainstream awareness of AI capabilities. Systems of this kind can generate text, code, legal drafts, academic essays, and policy briefs in seconds. Yet alongside their promise come concerns regarding misinformation, intellectual property, data privacy, and labor displacement. Generative AI has democratized access to powerful tools, but democratization without guardrails can magnify vulnerabilities.

Emerging debates now focus on algorithmic audits, transparency obligations, explainability standards, and mandatory human oversight. Pre-deployment impact assessments — particularly for high-risk systems in law enforcement, border control, healthcare, and critical infrastructure — are increasingly viewed as essential. Governance is moving from reactive correction toward anticipatory regulation. This shift reflects a growing recognition that once large-scale harm occurs, remediation is costly and trust is difficult to restore.
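To make the idea of an algorithmic audit a little more concrete, here is a minimal sketch of one check an auditor might run: comparing how often a system produces a favourable outcome for different groups. The function names, the group labels, and the sample decisions are all hypothetical and chosen only for illustration. The 0.8 threshold is the "four-fifths" rule of thumb from US employment guidance; it is not a requirement of the EU AI Act, and a real audit would look at far more than one metric.

```python
from collections import defaultdict


def selection_rates(records):
    """Return the share of favourable outcomes for each group.

    `records` is an iterable of (group, approved) pairs, where
    `approved` is True when the system gave a favourable decision.
    """
    totals = defaultdict(int)
    approved = defaultdict(int)
    for group, ok in records:
        totals[group] += 1
        if ok:
            approved[group] += 1
    return {group: approved[group] / totals[group] for group in totals}


def disparate_impact_ratio(records):
    """Lowest group selection rate divided by the highest.

    Returns None when no group received any favourable outcome,
    because the ratio is undefined in that case.
    """
    rates = selection_rates(records)
    highest = max(rates.values())
    if highest == 0:
        return None
    return min(rates.values()) / highest


# Hypothetical audit sample: group "A" is approved 8 times out of 10,
# group "B" is approved 5 times out of 10.
decisions = (
    [("A", True)] * 8 + [("A", False)] * 2
    + [("B", True)] * 5 + [("B", False)] * 5
)

ratio = disparate_impact_ratio(decisions)
FOUR_FIFTHS = 0.8  # rule-of-thumb threshold, an assumption for this sketch
flagged = ratio is not None and ratio < FOUR_FIFTHS
print(f"ratio={ratio:.3f} flagged={flagged}")
```

A ratio well below the threshold does not prove discrimination; it is a signal that the system deserves closer human review, which is the purpose of the oversight obligations discussed above.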

Another transformative issue is the intersection between AI and democracy. Deepfakes, automated persuasion systems, and micro-targeted political messaging have altered the information ecosystem. The integrity of elections and public discourse is no longer solely a media concern; it is a computational governance issue. Regulatory frameworks must therefore integrate digital rights protections with cybersecurity, data protection, and electoral safeguards.

At the same time, the Global South faces unique governance dilemmas. Many developing countries adopt AI systems built elsewhere, often without access to source code, training data transparency, or meaningful auditing rights. This asymmetry risks creating a form of digital dependency, where algorithmic infrastructure becomes externally controlled. Ethical AI, therefore, is not only about fairness and accountability — it is also about sovereignty and self-determination in technological development.

Responsible AI is ultimately about institutional design. It requires interdisciplinary collaboration among technologists, legal scholars, ethicists, policymakers, and civil society actors. It demands that procurement policies integrate ethical criteria. It requires that universities embed ethics into computer science curricula. It calls upon companies to treat compliance not as a checkbox exercise but as a strategic investment in long-term legitimacy.

The future of AI governance will not be shaped by a single regulation or jurisdiction. It will emerge through dialogue, comparative policy experimentation, and multilateral cooperation. International bodies, regional alliances, and cross-border research networks must work collectively to prevent regulatory fragmentation while respecting cultural and constitutional diversity.

The path forward is neither technophobic nor techno-utopian. It is pragmatic. Artificial intelligence is here to stay, and its transformative capacity is undeniable. The task before us is to ensure that innovation advances human dignity rather than undermining it. Ethical stewardship is not an obstacle to progress; it is the foundation upon which sustainable technological progress must rest.

In this defining decade, governance will determine whether AI becomes a tool of empowerment or a mechanism of silent exclusion. The responsibility lies not only with developers and lawmakers, but with all institutions shaping the digital future.
