War has always evolved alongside technology. From gunpowder to nuclear deterrence, each technological leap reshaped not only military strategy but global political order. Today, artificial intelligence is ushering in another transformation — one that may fundamentally redefine how wars are fought, controlled, and morally justified.

Autonomous weapons systems, often referred to as lethal autonomous weapon systems (LAWS), are no longer science fiction. These are systems capable of identifying, selecting, and engaging targets with varying degrees of human intervention. Unlike traditional weapons, their decision-making processes are partially delegated to algorithms. That shift — from human judgment to machine evaluation — is what makes this moment historically significant.

The global debate intensified as rapid advances in AI capabilities demonstrated how machines can process battlefield data at speeds far beyond human cognition. Facial recognition, swarm intelligence, autonomous drones, and predictive targeting systems are increasingly being integrated into defence infrastructures. While automation promises tactical precision and reduced risk to soldiers, it also introduces unprecedented ethical and legal questions.

The United Nations has been actively discussing regulatory approaches under the framework of the Convention on Certain Conventional Weapons (CCW). However, consensus remains elusive. Some states advocate a complete ban on fully autonomous lethal systems, while others support regulation that preserves what they term “meaningful human control.” The absence of binding international law leaves a regulatory vacuum just as the technology is advancing rapidly.

One emerging concern is algorithmic unpredictability. AI systems learn from data, adapt to environments, and sometimes behave in ways not explicitly programmed by their developers. In civilian settings, such unpredictability may lead to bias or system error. In military contexts, the consequences could be catastrophic — accidental escalation, misidentification of civilians, or unintended cross-border conflict. The speed of autonomous response systems may compress decision-making timelines, reducing opportunities for diplomatic de-escalation.

Another critical dimension is accountability. If an autonomous drone makes an unlawful strike, who is responsible? The programmer? The commander? The manufacturer? The state deploying it? International humanitarian law was developed on the assumption that human actors make decisions. The introduction of machine autonomy complicates the attribution of responsibility and challenges existing legal doctrines of command responsibility and proportionality.

Moreover, the integration of AI into defence systems risks triggering a new arms race. Major powers are investing heavily in military AI research. Unlike nuclear weapons, AI systems rely largely on software, making proliferation easier and detection harder. Smaller states and non-state actors may eventually gain access to autonomous capabilities through commercially available technologies adapted for military use. The democratization of dual-use AI tools increases strategic instability.

Yet the conversation cannot be reduced to fear. Proponents argue that properly designed autonomous systems could reduce collateral damage by improving precision and minimizing emotional decision-making under stress. Machine-assisted targeting, when combined with strict human oversight, may enhance compliance with international humanitarian law. The question, therefore, is not whether AI will be used in defence — it already is — but how governance frameworks can shape its responsible deployment.

A growing school of thought emphasizes “human-in-the-loop” or “human-on-the-loop” architectures. These models require meaningful human supervision over critical targeting decisions. However, defining what qualifies as “meaningful” remains contested. Is it real-time override capability? Is it pre-programmed constraints? Is it post-strike review? Without clarity, the term risks becoming rhetorical rather than regulatory.

Cyber vulnerabilities add another layer of complexity. Autonomous defence systems are software-dependent and therefore susceptible to hacking, spoofing, and adversarial attacks. A compromised autonomous weapons system could be manipulated to act against its operator’s interests. Thus, AI safety in defence is inseparable from cybersecurity resilience.

The ethical stakes are profound. Delegating life-and-death decisions to algorithms raises philosophical questions about human dignity and moral agency. Warfare has always involved tragedy, but it has also involved human deliberation. When machines select targets, does that erode the moral weight traditionally borne by human combatants? Or does it simply reflect the next stage of technological evolution in conflict?

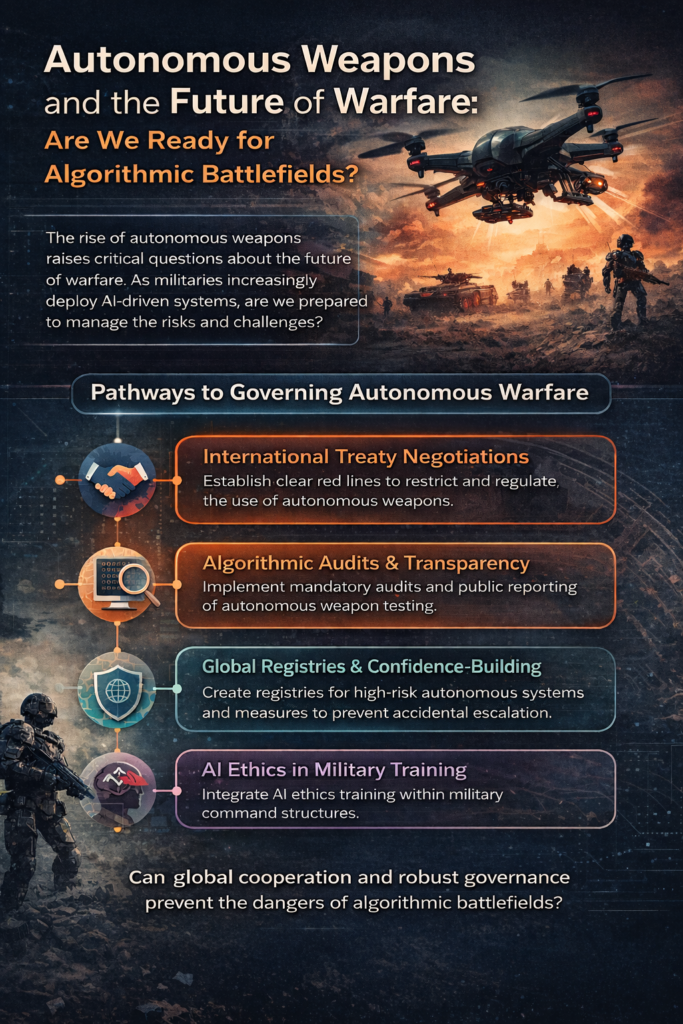

Emerging governance models suggest several pathways forward:

- International treaty negotiations establishing clear red lines

- Mandatory algorithmic audits and weapons testing transparency

- Global registries for high-risk autonomous military systems

- Confidence-building measures to prevent accidental escalation

- Integration of AI ethics training within military command structures

The urgency of these conversations cannot be overstated. Unlike previous military revolutions, AI evolves at software speed. Capabilities can scale globally in months rather than decades. Governance mechanisms, however, often move slowly. Bridging that gap is one of the defining security challenges of the 21st century.

The future battlefield may not be dominated by soldiers alone, but by networks of autonomous systems interacting at machine speed. Whether this transformation enhances security or destabilizes global order depends on decisions being made today in research labs, in defence ministries, and at international negotiating tables.

Technology does not determine destiny. Policy does. The question is not whether AI will shape warfare, since it already does, but whether humanity will shape the rules governing its use before algorithmic battlefields become the norm.
